Model Context Protocol

Register your app, grab your token, and connect your agents to trusted tools, MCP servers, and knowledge resources.

AI Front Door exposes its tools, MCP servers, and knowledge resources as a platform that any developer can integrate into their own agents, services, and workflows. This page covers everything you need to get started — from registering your app and obtaining a token, through to running your first agent with the Exco Partners CoreMethods MCP server.

All API access is authenticated with a bearer token. Tokens are scoped to an application registration and carry the permissions needed to call specific tools and knowledge endpoints.

Choose the integration style that fits your use case. All options use the same bearer token for authentication.

• MCP server: Connect any MCP-compatible agent runtime to the AI Front Door MCP server and expose tools and knowledge resources directly inside your LLM context.

• REST API: Call tools and retrieve knowledge via standard JSON REST endpoints. Ideal for backend services, orchestrators, and non-MCP agent frameworks.

• A2A delegation: Allow your agent to delegate tasks to the AI Front Door domain agent using the A2A protocol. Useful for multi-agent pipelines and escalation flows.

• Knowledge resources: Retrieve structured knowledge items, guidance, policy, and reference data, either as MCP resources or via REST, to ground your agent's responses.

Before you can call any AI Front Door API, you must register an application. Registration gives you an API key and grants access to the specific tools and knowledge resources you select.

1. Sign in to the AI Front Door portal.

2. Navigate to Settings → Developer Apps → “Register New App”.

3. Give your app a name, select the tools and knowledge resources it needs access to, and save.

4. Copy the generated API key for use in your agents and apps.

To access tools and resources, include an Authorization header with every request, for example:

    curl -X GET https://api.example.com/endpoint \
      -H "Authorization: Bearer exJhbGciOiJSUz324sdfInR5cCI6IkpXVCJ9..."
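In application code, the same header can be built once and reused. The following is a minimal TypeScript sketch: `authHeaders` is a hypothetical helper name, not part of any AI Front Door SDK, and it assumes the API key is sent unchanged as the bearer token, as in the curl example above.

```typescript
// Hypothetical helper (not an official API): builds the Authorization
// header that every AI Front Door request needs.
function authHeaders(apiKey: string): Record<string, string> {
  const key = apiKey.trim();
  if (key === "") {
    throw new Error("API key is empty; copy it from your app registration");
  }
  return { Authorization: `Bearer ${key}` };
}

// Example: merge with any other headers a request needs.
const requestHeaders = {
  ...authHeaders("YOUR_API_KEY"),
  Accept: "application/json",
};
```

Failing fast on an empty key tends to be easier to debug than a 401 from the server.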

You can use an AI Front Door MCP server to get access to registered tools and knowledge resources for any MCP-compatible agent runtime. The server URL and transport details are shown on your app registration page for each tool.

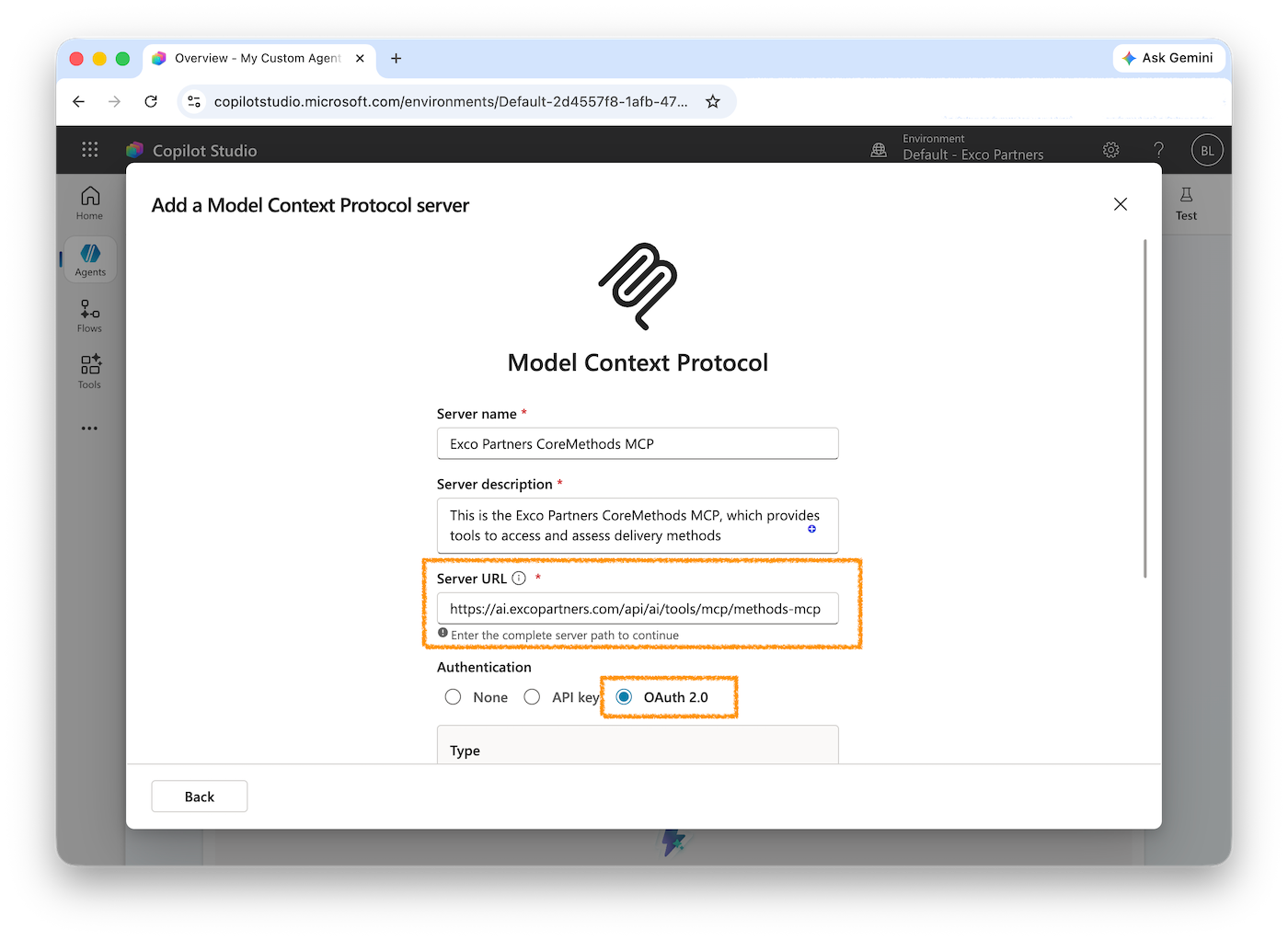

For an MCP server, the following details are the most relevant:

• Transport: e.g. HTTP (Streamable HTTP transport)

• Server URL: e.g. https://ai.excopartners/api/ai/tools/mcp/methods-mcp

You will also need the API key described above.
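Taken together, these three values are what an MCP client needs in order to connect. As a rough sketch, the function below gathers them into one settings object. `McpConnection` and `mcpConnection` are illustrative names, and the option names your MCP client expects may differ.

```typescript
// Illustrative shape only; real MCP clients may name these options differently.
interface McpConnection {
  transport: "http"; // Streamable HTTP, as shown on the registration page
  url: string;
  headers: { Authorization: string };
}

function mcpConnection(serverUrl: string, apiKey: string): McpConnection {
  // Refuse plain HTTP so the bearer token is never sent unencrypted.
  if (!/^https:\/\/\S+$/.test(serverUrl)) {
    throw new Error(`Expected an https:// server URL, got "${serverUrl}"`);
  }
  return {
    transport: "http",
    url: serverUrl,
    headers: { Authorization: `Bearer ${apiKey}` },
  };
}
```

Copy the server URL from your app registration page rather than hard-coding one from an example.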

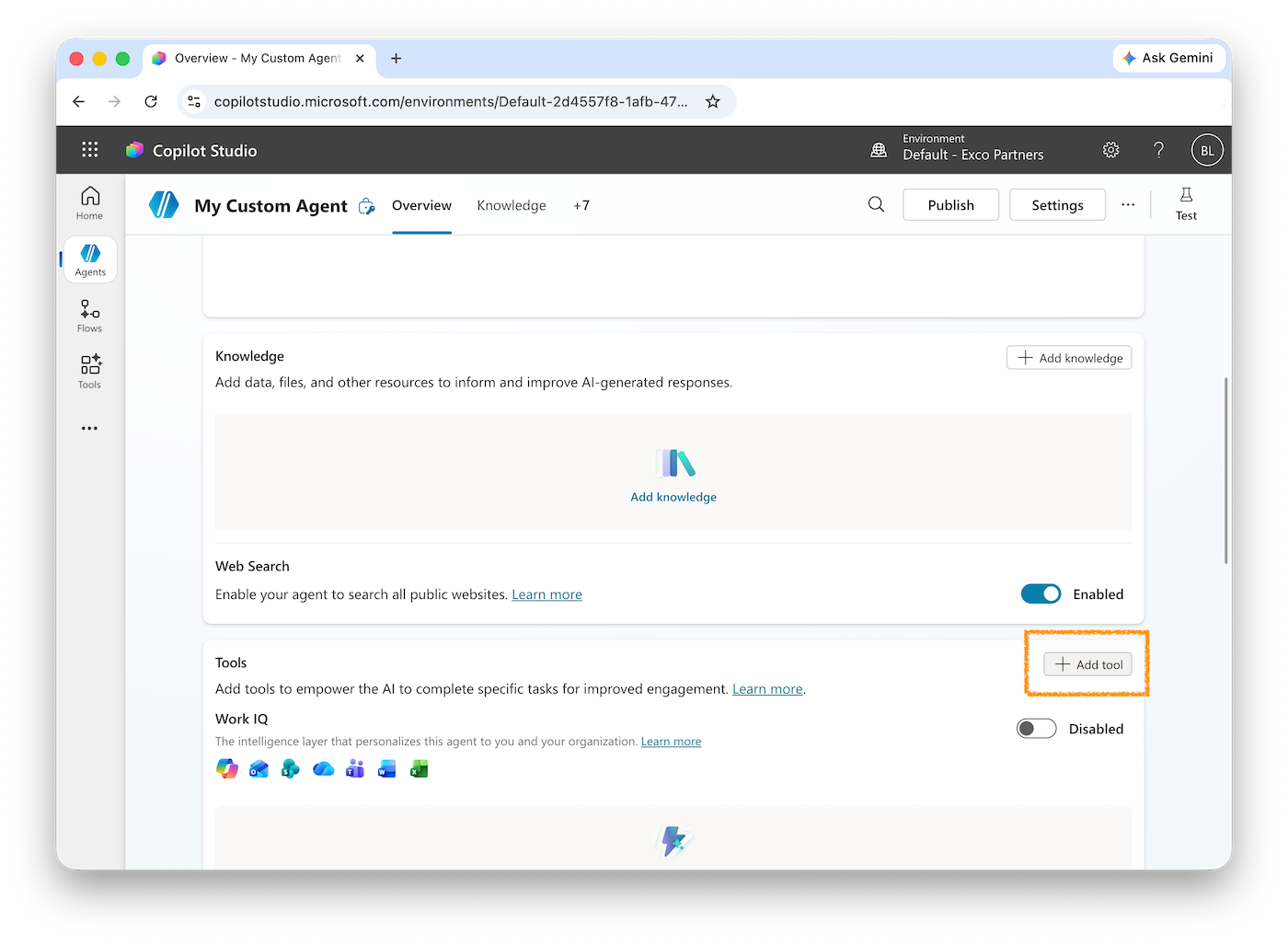

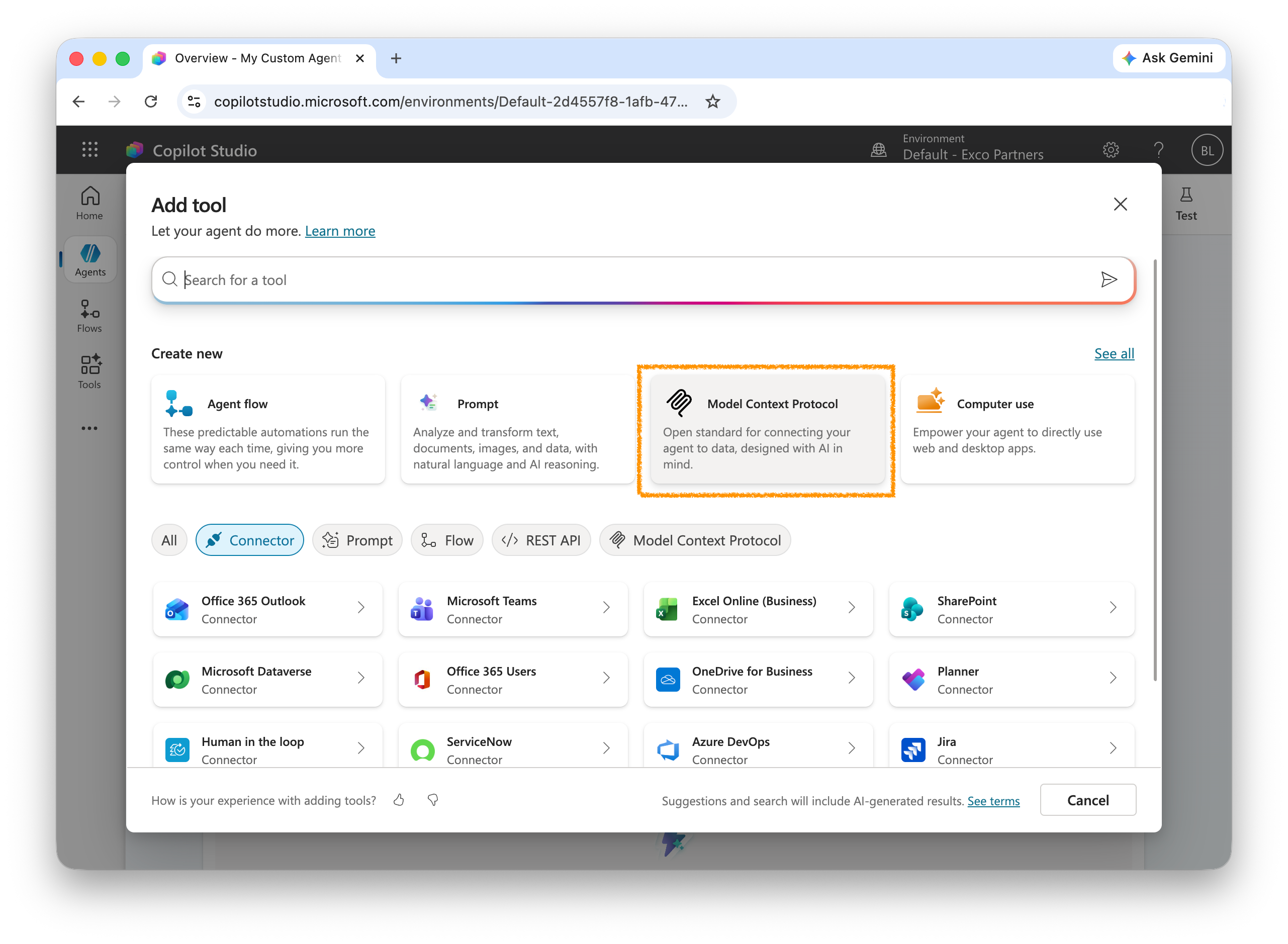

You can add an MCP server from this site to your custom Copilot agents.

The following example shows how to build a minimal agent using the Anthropic Claude SDK that connects to the Exco Partners CoreMethods MCP server via AI Front Door. CoreMethods exposes a curated set of decision-support tools for common service delivery scenarios.

Install the dependencies:

    npm install @anthropic-ai/sdk @anthropic-ai/mcp-client

Then create the agent:

    import Anthropic from "@anthropic-ai/sdk";
    import { MCPClient } from "@anthropic-ai/mcp-client";

    const AI_FRONT_DOOR_TOKEN = process.env.AIFD_TOKEN!;

    // 1. Connect to the AI Front Door MCP server (CoreMethods is exposed here)
    const mcpClient = new MCPClient({
      transport: "http",
      url: "https://api.aifrontdoor.com/mcp",
      headers: {
        Authorization: `Bearer ${AI_FRONT_DOOR_TOKEN}`,
      },
    });
    await mcpClient.connect();

    // 2. Fetch available tools from MCP
    const { tools } = await mcpClient.listTools();

    // 3. Create the Anthropic client (reads ANTHROPIC_API_KEY from the environment)
    const anthropic = new Anthropic();

    // 4. Run the agent loop
    async function runAgent(userMessage: string) {
      const messages: Anthropic.MessageParam[] = [
        { role: "user", content: userMessage },
      ];

      while (true) {
        const response = await anthropic.messages.create({
          model: "claude-sonnet-4-6",
          max_tokens: 4096,
          // Pass the MCP tools to Claude. The Messages API expects `input_schema`,
          // so rename `inputSchema` first if your client returns raw MCP definitions.
          tools,
          messages,
        });

        // If Claude wants to call a tool, execute it via MCP
        if (response.stop_reason === "tool_use") {
          const toolResults: Anthropic.ToolResultBlockParam[] = [];

          for (const block of response.content) {
            if (block.type === "tool_use") {
              console.log(`Calling tool: ${block.name}`, block.input);
              const result = await mcpClient.callTool({
                name: block.name,
                arguments: block.input as Record<string, unknown>,
              });
              toolResults.push({
                type: "tool_result",
                tool_use_id: block.id,
                content: JSON.stringify(result),
              });
            }
          }

          // Echo Claude's turn, then return every tool result in one user turn
          messages.push({ role: "assistant", content: response.content });
          messages.push({ role: "user", content: toolResults });
          continue;
        }

        // Final text response
        const textBlock = response.content.find((b) => b.type === "text");
        return textBlock?.type === "text" ? textBlock.text : "";
      }
    }

    // Example usage
    const answer = await runAgent(
      "What are the eligibility requirements for unfair dismissal claims?"
    );
    console.log(answer);

    await mcpClient.disconnect();

Set the required environment variables:

    # .env
    AIFD_TOKEN=eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9...
    ANTHROPIC_API_KEY=sk-ant-...

Knowledge resources are also available over a plain REST API. This is useful when you want to retrieve guidance, policy documents, or reference data outside of an agent context, for example to pre-populate RAG pipelines or build search interfaces.

Fetch the knowledge collection, or a single item by its slug:

    curl https://api.aifrontdoor.com/api/knowledge \
      -H "Authorization: Bearer YOUR_BEARER_TOKEN"

    curl https://api.aifrontdoor.com/api/knowledge/unfair-dismissal-eligibility \
      -H "Authorization: Bearer YOUR_BEARER_TOKEN"

The same single-item request in TypeScript:

    async function getKnowledge(slug: string, token: string) {
      const res = await fetch(
        `https://api.aifrontdoor.com/api/knowledge/${slug}`,
        { headers: { Authorization: `Bearer ${token}` } }
      );
      if (!res.ok) throw new Error(`Knowledge fetch failed: ${res.status}`);
      return res.json();
    }

    // Read the bearer token from the environment rather than hard-coding it
    const token = process.env.AIFD_TOKEN!;
    const item = await getKnowledge("unfair-dismissal-eligibility", token);
    console.log(item.title, item.summary);

If you have a general-purpose agent that needs domain expertise it doesn't have, you can delegate to the AI Front Door domain agent using the A2A protocol. Your agent POSTs a task to the A2A endpoint and receives a structured result.

    curl -X POST https://api.aifrontdoor.com/a2a/tasks \
      -H "Authorization: Bearer YOUR_BEARER_TOKEN" \
      -H "Content-Type: application/json" \
      -d '{
        "task": "Summarise the general protections process for a small business employer",
        "context": {
          "userRole": "employer",
          "employeeCount": 12
        }
      }'

Example response:

    {
      "taskId": "task_01J...",
      "status": "completed",
      "result": {
        "summary": "Under the general protections provisions...",
        "sources": [
          { "title": "General Protections Guide", "slug": "general-protections-guide" }
        ]
      }
    }

Scopes are granted when you register your app. Common scopes are tools:read (call tools), knowledge:read (retrieve knowledge resources), and a2a:write (submit A2A tasks). Request only the scopes your app needs.
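To keep scope requests minimal, it can help to work out up front which scopes a given app needs. The sketch below assumes a one-to-one mapping between integration style and scope, taken from the list above. The `Integration` labels and the `missingScopes` helper are illustrative names, not part of the platform.

```typescript
type Scope = "tools:read" | "knowledge:read" | "a2a:write";
type Integration = "tools" | "knowledge" | "a2a";

// Scope needed by each integration style, per the list above.
const REQUIRED_SCOPE: Record<Integration, Scope> = {
  tools: "tools:read",
  knowledge: "knowledge:read",
  a2a: "a2a:write",
};

// Returns the scopes an app still lacks for the integrations it plans to use.
function missingScopes(granted: Scope[], planned: Integration[]): Scope[] {
  return planned
    .map((integration) => REQUIRED_SCOPE[integration])
    .filter((scope) => granted.indexOf(scope) === -1);
}
```

An empty result means the registration already covers everything the app plans to call.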

Bearer tokens expire after 3600 seconds (1 hour) by default. Implement token refresh in your application by re-requesting a token when you receive a 401 response.
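One way to implement the refresh described above is to retry a request once after a 401. The sketch below is written against a minimal response shape so it does not depend on a particular HTTP client. `fetchToken` is a hypothetical function you supply, because how a new token is re-requested is not specified here, and the 60-second safety margin is an assumption.

```typescript
// Minimal response shape so the sketch works with any HTTP client.
interface HttpResult {
  status: number;
}

// Default token lifetime stated above: 3600 seconds.
const DEFAULT_TTL_SECONDS = 3600;

// True when a token issued at `issuedAtMs` should be replaced before use.
// `skewSeconds` is an assumed safety margin, not a documented value.
function isTokenStale(
  issuedAtMs: number,
  nowMs: number,
  ttlSeconds: number = DEFAULT_TTL_SECONDS,
  skewSeconds: number = 60,
): boolean {
  return nowMs - issuedAtMs >= (ttlSeconds - skewSeconds) * 1000;
}

// Runs `call` with the current token and retries exactly once with a
// fresh token if the server answers 401.
async function callWithRefresh<T extends HttpResult>(
  call: (token: string) => Promise<T>,
  fetchToken: () => Promise<string>, // hypothetical: supplied by your app
  currentToken: string,
): Promise<T> {
  const first = await call(currentToken);
  if (first.status !== 401) return first;
  const freshToken = await fetchToken();
  return call(freshToken);
}
```

Retrying only once avoids looping forever when the 401 is caused by something other than expiry, such as a revoked registration.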

The MCP server is not tied to a single runtime. It implements the standard Model Context Protocol (2025-03-26 spec) and is compatible with any MCP-capable runtime, including OpenAI's Agents SDK, LangChain, and open-source MCP clients.

Rate limits are enforced per application registration. Default limits are 60 tool calls per minute and 1000 per hour. Contact us if your use case requires higher throughput.
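A client-side limiter can keep an app under these defaults so that it does not rely on server rejections. The sketch below uses a sliding window over call timestamps. The class and field names are illustrative, and the server's own windowing algorithm is not documented here, so treat this as an approximation.

```typescript
interface RateWindow {
  windowMs: number;
  maxCalls: number;
}

class SlidingWindowLimiter {
  private readonly windows: RateWindow[];
  private timestamps: number[] = [];

  constructor(windows: RateWindow[]) {
    this.windows = windows;
  }

  // Records the call and returns true only if every window has capacity.
  tryAcquire(nowMs: number): boolean {
    const longest = Math.max(...this.windows.map((w) => w.windowMs));
    // Forget calls that no window can still count.
    this.timestamps = this.timestamps.filter((t) => nowMs - t < longest);
    for (const w of this.windows) {
      const used = this.timestamps.filter((t) => nowMs - t < w.windowMs).length;
      if (used >= w.maxCalls) return false;
    }
    this.timestamps.push(nowMs);
    return true;
  }
}

// Default limits from the text above: 60 per minute and 1000 per hour.
const defaultLimiter = new SlidingWindowLimiter([
  { windowMs: 60 * 1000, maxCalls: 60 },
  { windowMs: 60 * 60 * 1000, maxCalls: 1000 },
]);
```

Call `defaultLimiter.tryAcquire(Date.now())` before each tool call, and queue or delay the call when it returns false.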

CoreMethods tool definitions are published on the Tools page of this portal. Each tool includes its input schema, example payloads, and authentication requirements. You can also discover them at runtime via the MCP tools/list endpoint.
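For clients that speak MCP directly, `tools/list` is a JSON-RPC 2.0 method. The sketch below builds the request message and reads tool names from a result. The helper names are illustrative. An MCP SDK would normally handle this for you, including the initialization handshake that has to happen before tools can be listed.

```typescript
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

// Subset of the MCP tool definition fields used in this sketch.
interface McpTool {
  name: string;
  description?: string;
  inputSchema: unknown;
}

interface ToolsListResult {
  tools: McpTool[];
  nextCursor?: string; // present when more pages are available
}

// Builds a tools/list request; pass the previous `nextCursor` to page.
function toolsListRequest(id: number, cursor?: string): JsonRpcRequest {
  const request: JsonRpcRequest = { jsonrpc: "2.0", id, method: "tools/list" };
  if (cursor !== undefined) request.params = { cursor };
  return request;
}

// Extracts the tool names from a tools/list result.
function toolNames(result: ToolsListResult): string[] {
  return result.tools.map((tool) => tool.name);
}
```

Comparing `toolNames` output with the Tools page is a quick check that a registration exposes the tools you selected.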